PythonGateway  Issue Detected

Issue Detected

0

0 3

3

What's new in this version

jupyter support, fast transfer

PythonGateway

Python Gateway for InterSystems Data Platforms. Execute Python code and more from InterSystems IRIS.

This projects brings you the power of Python right into your InterSystems IRIS environment:

- Execute arbitrary Python code

- Seamlessly transfer data from InterSystems IRIS into Python

- Build intelligent Interoperability business processes with Python Interoperability Adapter

- Save, examine, modify and restore Python context from InterSystems IRIS

Important notice

This project is a community supported bridge between InterSystems IRIS and Python. Starting with 2020.3 InterSystems IRIS provides out-of-the-box Python Gateway implementation, officially supported by InterSystems. Documentation is available here.

ML Toolkit user group

ML Toolkit user group is a private GitHub repository set up as part of InterSystems corporate GitHub organization. It is addressed to the external users that are installing, learning or are already using ML Toolkit components. To join ML Toolkit user group, please send a short e-mail at the following address: MLToolkit@intersystems.com and indicate in your e-mail the following details (needed for the group members to get to know and identify you during discussions):

- GitHub username

- Full Name (your first name followed by your last name in Latin script)

- Organization (you are working for, or you study at, or your home office)

- Position (your actual position in your organization, or “Student”, or “Independent”)

- Country (you are based in)

Installation

- Install Python 3.6.7 64 bit (other Python versions will not work due to ABI incompatibility).

- Install

dillmodule:pip install dill(required for context harvesting) - Download latest PythonGateway release and unpack it.

- From the InterSystems IRIS terminal, load ObjectScript code. To do that execute:

do $system.OBJ.ImportDir("/path/to/unpacked/pythongateway","*.cls","c",,1)) in Production (Ensemble-enabled) namespace. In case you want to Production-enable namespace call:write ##class(%EnsembleMgr).EnableNamespace($Namespace, 1). - Place callout DLL/SO/DYLIB in the

binfolder of your InterSystems IRIS installation. Library file should be placed into a path returned bywrite ##class(isc.py.Callout).GetLib().

Windows

- Check that your

PYTHONHOMEenvironment variable points to Python 3.6.7. - Check that your SYSTEM

PATHenvironment variable has:

%PYTHONHOME%variable (or directory it points to)%PYTHONHOME%\Scriptsdirectory

- In the InterSystems IRIS Terminal, run:

write $SYSTEM.Util.GetEnviron("PYTHONHOME")and verify it prints out the directory of Python installationwrite $SYSTEM.Util.GetEnviron("PATH")and verify it prints out the directory of Python installation andScriptsfolder inside Python installation.

Linux

- Check that your SYSTEM

PATHenvironment variable has/usr/liband/usr/lib/x86_64-linux-gnu, preferably at the beginning. Use/etc/environmentfile to set environment variables. - In cause of errors check Troubleshooting section

undefined symbol: _Py_TrueStructand specify PythonLib property.

Mac

- Only python 3.6.7 from Python.org. is currently supported. Check

PATHvariable.

If you modified environment variables restart your InterSystems product.

Docker

- To build docker image:

- Copy

iscpython.sointo repository root (if it’s not there already) - Execute in the repository root

docker build --force-rm --tag intersystemsdc/irispy:latest .By default the image is built uponstore/intersystems/iris-community:2019.4.0.383.0image, however you can change that by providingIMAGEvariable. To build from InterSystems IRIS Community Edition execute:docker build --build-arg IMAGE=store/intersystems/iris-community:2019.4.0.383.0 --force-rm --tag intersystemsdc/irispy:latest .

- To run docker image execute (key is not needed for Community based images):

docker run -d \

-p 52773:52773 \

-v /<HOST-DIR-WITH-iris.key>/:/mount \

--name irispy \

intersystemsdc/irispy:latest \

--key /mount/iris.key \

- Test process

isc.py.test.Processsaves image artifact into temp directory. You might want to change that path to a mounted directory. To do that edit annotation forCorrelation Matrix: Graphcall, specifying valid filepath forf.savefigfunction. - For terminal access execute:

docker exec -it irispy sh. - Access SMP with SuperUser/SYS or Admin/SYS user/password.

- To stop container execute:

docker stop irispy && docker rm --force irispy.

Docker wIth GPU support

After building a normal docker container, execute: docker build -t intersystemsdc/irispy:latest-gpu -f Dockerfile-GPU .

Run this container with: --gpus all flag:

docker run -d \

--gpus all \

-p 52773:52773 \

-v /<HOST-DIR-WITH-iris.key>/:/mount \

--name irispy \

intersystemsdc/irispy:latest-gpu \

--key /mount/iris.key \

Requires installed NVIDIA Container Toolkit on Docker host.

Use

- Call:

set sc = ##class(isc.py.Callout).Setup()once per systems start (add to ZSTART: docs, sample routine available inrtnfolder). - Call main method (can be called many times, context persists):

write ##class(isc.py.Main).SimpleString(code, variable, , .result) - Call:

set sc = ##class(isc.py.Callout).Finalize()to free Python context. - Call:

set sc = ##class(isc.py.Callout).Unload()to free callout library.

set sc = ##class(isc.py.Callout).Setup()

set sc = ##class(isc.py.Main).SimpleString("x='HELLO'", "x", , .x)

write x

set sc = ##class(isc.py.Callout).Finalize()

set sc = ##class(isc.py.Callout).Unload()

Terminal API

Generally the main interface to Python is isc.py.Main. It offers these methods (all return %Status), which can be separated into three categories:

- Code execution

- Data transfer

- Auxiliary

Code execution

These methods allow execution of arbitrary Python code:

ImportModule(module, .imported, .alias)- import module with alias.SimpleString(code, returnVariable, serialization, .result)- for cases where both code and variable are strings.ExecuteCode(code, variable)- executecode(it may be a stream or string), optionally set result intovariable.ExecuteFunction(function, positionalArguments, keywordArguments, variable, serialization, .result)- execute Python function or method, write result into Pyhtonvariable, return chosen serialization inresult.ExecuteFunctionArgs(function, variable, serialization, .result, args...)- execute Python function or method, write result into Pyhtonvariable, return chosen serialization inresult. BuildspositionalArgumentsandkeywordArgumentsand passes them toExecuteFunction. It’s recommended to useExecuteFunction. More information in Gateway docs.

Data Transfer

Transfer data into and from Python.

Python -> InterSystems IRIS

GetVariable(variable, serialization, .stream, useString)- getserializationofvariableinstream. IfuseStringis 1 and variable serialization can fit into string then string is returned instead of the stream.GetVariableJson(variable, .stream, useString)- get JSON serialization of variable.GetVariablePickle(variable, .stream, useString, useDill)- get Pickle (or Dill) serialization of variable.

InterSystems IRIS -> Python

ExecuteQuery(query, variable, type, namespace)- create resultset (pandasdataframeorlist) from sql query and set it intovariable.isc.pypackage must be available innamespace.ExecuteGlobal(global, variable, type, start, end, mask, labels, namespace)- transferglobaldata (fromstarttoend) to Python variable oftype:listof tuples or pandasdataframe. Formaskandlabelsarguments specification check class docs and Data Transfer docs.ExecuteClass(class, variable, type, start, end, properties, namespace)- transfer class data to Python list of tuples or pandas dataframe.properties- comma-separated list of properties to form dataframe from. * and ? wildcards are supported. Defaults to * (all properties). %%CLASSNAME property is ignored. Only stored properties can be used.ExecuteTable(table, variable, type, start, end, properties, namespace)- transfer table data to Python list of tuples or pandas dataframe.

ExecuteQuery is universal (any valid SQL query would be transfered into Python). ExecuteGlobal and its wrappers ExecuteClass and ExecuteTable, however, operate with a number of limitations. But they are much faster (3-5 times faster than ODBC driver and 20 times faster than ExecuteQuery). More information in Data Transfer docs.

Auxiliary

Support methods.

GetVariableInfo(variable, serialization, .defined, .type, .length)- get info about variable: is it defined, type and serialized length.GetVariableDefined(variable, .defined)- is variable defined.GetVariableType(variable, .type)- get variable FQCN.GetStatus()- returns last occurred exception in Python and clears it.GetModuleInfo(module, .imported, .alias)- get module alias and is it currently imported.GetFunctionInfo(function, .defined, .type, .docs, .signature, .arguments)- get function information.

Possible Serializations:

##class(isc.py.Callout).SerializationStr- Serialization by str() function##class(isc.py.Callout).SerializationRepr- Serialization by repr() function

Shell

To open Python shell: do ##class(isc.py.util.Shell).Shell(). To exit press enter.

Context persistence

Python context can be persisted into InterSystems IRIS and restored later on. There are currently three public functions:

- Save context:

set sc = ##class(isc.py.data.Context).SaveContext(.context, maxLength, mask, verbose)wheremaxLength- maximum length of saved variable. If variable serialization is longer than that, it would be ignored. Set to 0 to get them all,mask- comma separated list of variables to save (special symbols * and ? are recognized),verbosespecifies displaying context after saving, andcontextis a resulting Python context. Get context id withcontext.%Id() - Display context:

do ##class(isc.py.data.Context).DisplayContext(id)whereidis an id of a stored context. Leave empty to display current context. - Restore context:

do ##class(isc.py.data.Context).RestoreContext(id, verbose, clear)whereclearkills currently loaded context if set to 1.

Context is saved into isc.py.data package and can be viewed/edited by SQL and object methods. Currently modules, functions and variables are saved.

Interoperability adapter

Interoperability adapter isc.py.ens.Operation offers ability to interact with Python process from Interoperability productions. Currently five requests are supported:

- Execute Python code via

isc.py.msg.ExecutionRequest. Returnsisc.py.msg.ExecutionResponsewith requested variable values - Execute Python code via

isc.py.msg.StreamExecutionRequest. Returnsisc.py.msg.StreamExecutionResponsewith requested variable values. Same as above, but accepts and returns streams instead of strings. - Set dataset from SQL Query with

isc.py.msg.QueryRequest. ReturnsEns.Response. - Set dataset faster from Global/Class/Table with

isc.py.msg.GlobalRequest/isc.py.msg.ClassRequest/isc.py.msg.TableRequest. ReturnsEns.Response. - Save Python context via

isc.py.msg.SaveRequest. ReturnsEns.StringResponsewith context id. - Restore Python context via

isc.py.msg.RestoreRequest.

Check request/response classes documentation for details.

Settings:

Initializer- select a class implementingisc.py.init.Abstract. It can be used to load functions, modules, classes and so on. It would be executed at process start.PythonLib- (Linux only) if you see loading errors set it tolibpython3.6m.soor even to a full path to the shared library.

Note: isc.py.util.BPEmulator class is added to allow easy testing of Python Interoperability business processes. It can execute business process (python parts) in a current job.

Variable substitution

All business processes inheriting from isc.py.ens.ProcessUtils can use GetAnnotation(name) method to get value of activity annotation by activity name. Activity annotation can contain variables which would be calculated on ObjectScript side before being passed to Python. This is the syntax for variable substitution:

${class:method:arg1:...:argN}- execute method#{expr}- execute ObjectScript code

Check test isc.py.test.Process business process for example in Correlation Matrix: Graph activity: f.savefig(r'#{process.WorkDirectory}SHOWCASE${%PopulateUtils:Integer:1:100}.png')

In this example:

#{process.WorkDirectory}returns WorkDirectory property ofprocessobject which is an instance ofisc.py.test.Processclass and current business process.${%PopulateUtils:Integer:1:100}callsIntegermethod of%PopulateUtilsclass passing arguments1and100, returning random integer in range1...100.

Test Business Process

Along with callout code and Interoperability adapter there’s also a test Interoperability Production and test Business Process. To use them:

- In OS bash execute

pip install pandas matplotlib seaborn. - Execute:

do ##class(isc.py.test.CannibalizationData).Import()to populate test data. - Start

isc.py.test.Productionproduction. - Send empty

Ens.Requestmessage to theisc.py.test.Process.

Notes

- If you want to use

ODBCconnection, on Windows install pyodbc:pip install pyodbc, on Linux install:apt-get install unixodbc unixodbc-dev python-pyodbc. - If you want to use

JDBCconnection, install JayDeBeApi:pip install JayDeBeApi. On Linux you might need to install:apt-get install python-aptbeforehand. - If you get errors similar to

undefined symbol: _Py_TrueStructinisc.py.ens.Operationoperation set settingPythonLibtolibpython3.6m.soor even to a full path of the shared library. - In test Business Process

isc.py.test.Processedit annotation forODBCorJDBCcalls, specifying correct connection string. - In production, for the sample business process

isc.py.test.ProcesssetConnectionTypesetting to a preferred connection type (defaults to RAW, change only if you need to test xDBC connectivity).

Unit tests

To run tests execute:

set repo = ##class(%SourceControl.Git.Utils).TempFolder()

set ^UnitTestRoot = ##class(%File).SubDirectoryName(##class(%File).SubDirectoryName(##class(%File).SubDirectoryName(repo,"isc"),"py"),"unit",1)

set sc = ##class(%UnitTest.Manager).RunTest(,"/nodelete")

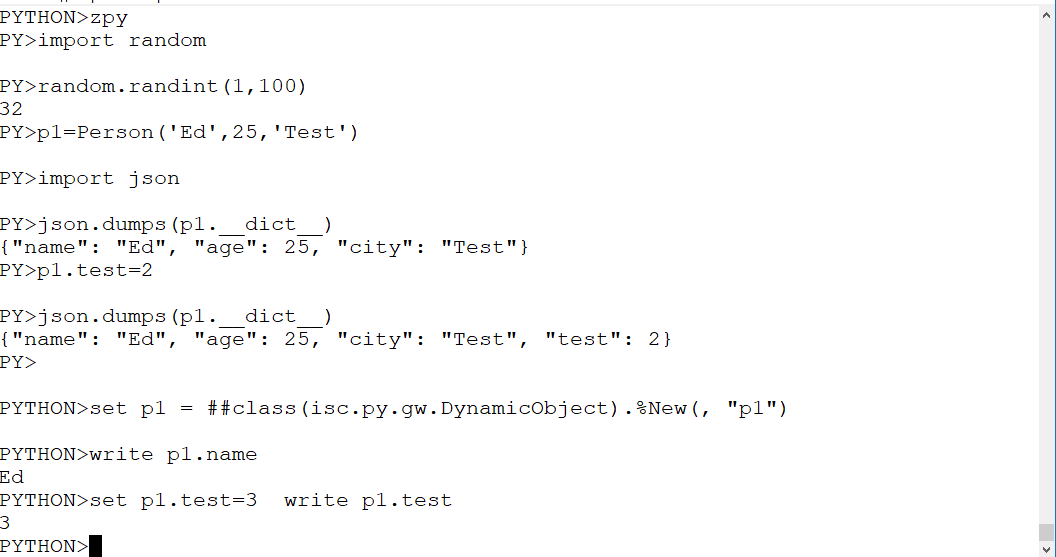

ZLANGC00

Install ZLANG routine from rtn folder to add zpy command:

zpy "import random"

zpy "x=random.random()"

zpy "x"

>0.4157151243124494

Argumentless zpy command opens python shell.

Jupyter

Check https://github.com/intersystems-community/PythonGateway/blob/master/jupyter folder for details on how to run Jupyter with PythonGateway.

Limitations

There are several limitations associated with the use of PythonAdapter.

- Modules reinitialization. Some modules may only be loaded once during process lifetime (i.e. numpy). While Finalization clears the context of the process, repeated load of such libraries terminates the process. Discussions: 1, 2.

- Variables. Do not use these variables:

zzz*variables. Please report any leakage of these variables. System code should always clear them. - Functions Do not redefine

zzz*()functions. - Context persistence. Only pickled/dill variables could be restored correctly. Module imports are supported.

Development

Development of ObjectScript is done via cache-tort-git in UDL mode.

Development of C code is done in Eclipse.

Commits

Commits should follow the pattern: moule: description issue. List of modules:

- Callout - C and ObjectScript callout interface in

isc.py.Callout. - API - terminal API, mainly

isc.py.Main. - Gateway - proxy classes generation.

- Proxyless Gateway -

isc.py.gw.DynamicObjectclass. - Interoperability - support utilities for Interoperability Business Processes.

- Tests - unit tests and test production.

- Docker - containers.

- Docs - documentation.

Building

Windows

- Install MinGW-w64 you’ll need

makeandgcc. - Rename

mingw32-make.exetomake.exeinmingw64\bindirectory. - Set

GLOBALS_HOMEenvironment variable to the root of Caché or Ensemble installation. - Set

PYTHONHOMEenvironment variable to the root of Python3 installation. UsuallyC:\Users\<User>\AppData\Local\Programs\Python\Python3<X> - Open MinGW bash (

mingw64env.cmdormingw-w64.bat). - In

<Repository>\c\executemake.

Linux

It’s recommended to use Linux OS which uses python3 by default, i.e. Ubuntu 18.04.1 LTS. Skip steps 1 and maybe even 2 if your OS has python 3.6 as default python (python3 --version or python --version or python3.6 --version).

- Add Python 3.6 repo:

add-apt-repository ppa:jonathonf/python-3.6andapt-get update - Install:

apt install python3.6 python3.6-dev libpython3.6-dev build-essential - Set

GLOBALS_HOMEenvironment variable to the root of Caché or Ensemble installation. - Set environment variable

PYTHONVERto the python version you want to build, i.e.:export PYTHONVER=3.6 - In

<Repository>/c/executemake.

Mac OS X

- Install Python 3.6 and gcc compiler.

- Set

GLOBALS_HOMEenvironment variable to the root of Caché or Ensemble installation. - Set environment variable

PYTHONVERto the python version you want to build, i.e.:export PYTHONVER=3.6 - In

<Repository>/c/execute:

gcc -Wall -Wextra -fpic -O3 -fno-strict-aliasing -Wno-unused-parameter -I/Library/Frameworks/Python.framework/Versions/${PYTHONVER}/Headers -I${GLOBALS_HOME}/dev/iris-callin/include -c -o iscpython.o iscpython.c

gcc -dynamiclib -L/Library/Frameworks/Python.framework/Versions/${PYTHONVER}/lib -L/usr/lib -lpython${PYTHONVER}m -lpthread -ldl -lutil -lm -Xlinker iscpython.o -o iscpython.dylib

If you have a Mac please update makefile so we can build Mac version via Make.

Troubleshooting

<DYNAMIC LIBRARY LOAD> exception

- Check that OS has correct python installed. Open python, execute this script:

import sys

sys.version

The result should contain: Python 3.6.7 and 64 bit. If it’s not, install Python 3.6.7 64 bit.

-

Check OS-specific installation steps. Make sure that path relevant for InterSystems IRIS (usually system) contains Python installation.

-

Make sure that InterSystems IRIS can access Python installation.

-

If you use PyEnv you need to install Python 3.6.7. with shared library support:

env PYTHON_CONFIGURE_OPTS="--enable-shared" pyenv install 3.6.7

pyenv global 3.6.7

- To change

LD_LIBRARY_PATHvariable set LibPath config property and restart InterSystems IRIS. Note that a folder name in the LibPath MUST be without trailing slash, for example:/usr/local/lib. Also some notes on Python compilation. GetLD_LIBRARY_PATHvalue after restart with:w $system.Util.GetEnviron("LD_LIBRARY_PATH").

Module not found error

Sometimes you can get module not found error. Here’s how to fix it. Each step constitutes a complete solution so restart IRIS and check that the problem is fixed.

- Check that OS bash and IRIS use the same python. Open python, execute this script from both, they should be the same.

import sys

ver=sys.version

ver

If they are not the same search for a Python executable that is actually used by InterSystems IRIS.

- Check that module is, in fact, installed. Open OS bash, execute

python(maybepython3orpython36on Linux) and inside opened python bash executeimport <module>. If it fails with some error run in OS bashpip install <module>. Note that module name for import and module name for pip could be different. - If you’re sure that module is installed, compare paths used by python (it’s not system path). Get path with:

import sys

path=sys.path

path

They should be the same. If they are not the same read how PYTHONPATH (python) is formed here and adjust your OS environment to form pythonpath (python) correctly, i.e. set PYTHONPATH (system) env var to C:\Users\<USER>\AppData\Roaming\Python\Python36\site-packages or other directories where your modules reside (and other missing directories).

- Compare python paths again and they are not the same or the problem persists add missing paths explicitly to the

isc.py.ens.Operationinit code (for interoperability) and on process start (for Callout wrapper):

do ##class(isc.py.Main).SimpleString("import sys")

do ##class(isc.py.Main).SimpleString("sys.path.append('C:\\Users\\<USER>\\AppData\\Roaming\\Python\\Python36\\site-packages')")

undefined symbol: _Py_TrueStruct or similar errors

- Check

ldconfigand adjust it to point to the directory with Python shared library. - If it fails:

- For interoperability in

isc.py.ens.Operationoperation set settingPythonLibtolibpython3.6m.soor even to a full path of the shared library. - For Callout wrapper on process start call

do ##class(isc.py.Callout).Initialize("libpython3.6m.so")alternatively pass a full path of the shared library.

- For interoperability in

PyODBC on Linux and Mac

- Install unixodbc:

apt-get install unixodbc-dev - Install PyODBC:

pip install pyodbc - Set connection string:

cnxn=pyodbc.connect(('Driver=/<IRIS directory>/bin/libirisodbcu35.so;Server=localhost;Port=51773;database=USER;UID=_SYSTEM;PWD=SYS'),autocommit=True)

Some notes. Call set sc = ##class(isc.py.util.Installer).ConfigureTestProcess(user, pass, host, port, namespace) to configure test process automatically.