© 2026 InterSystems Corporation, Cambridge, MA. All rights reserved.

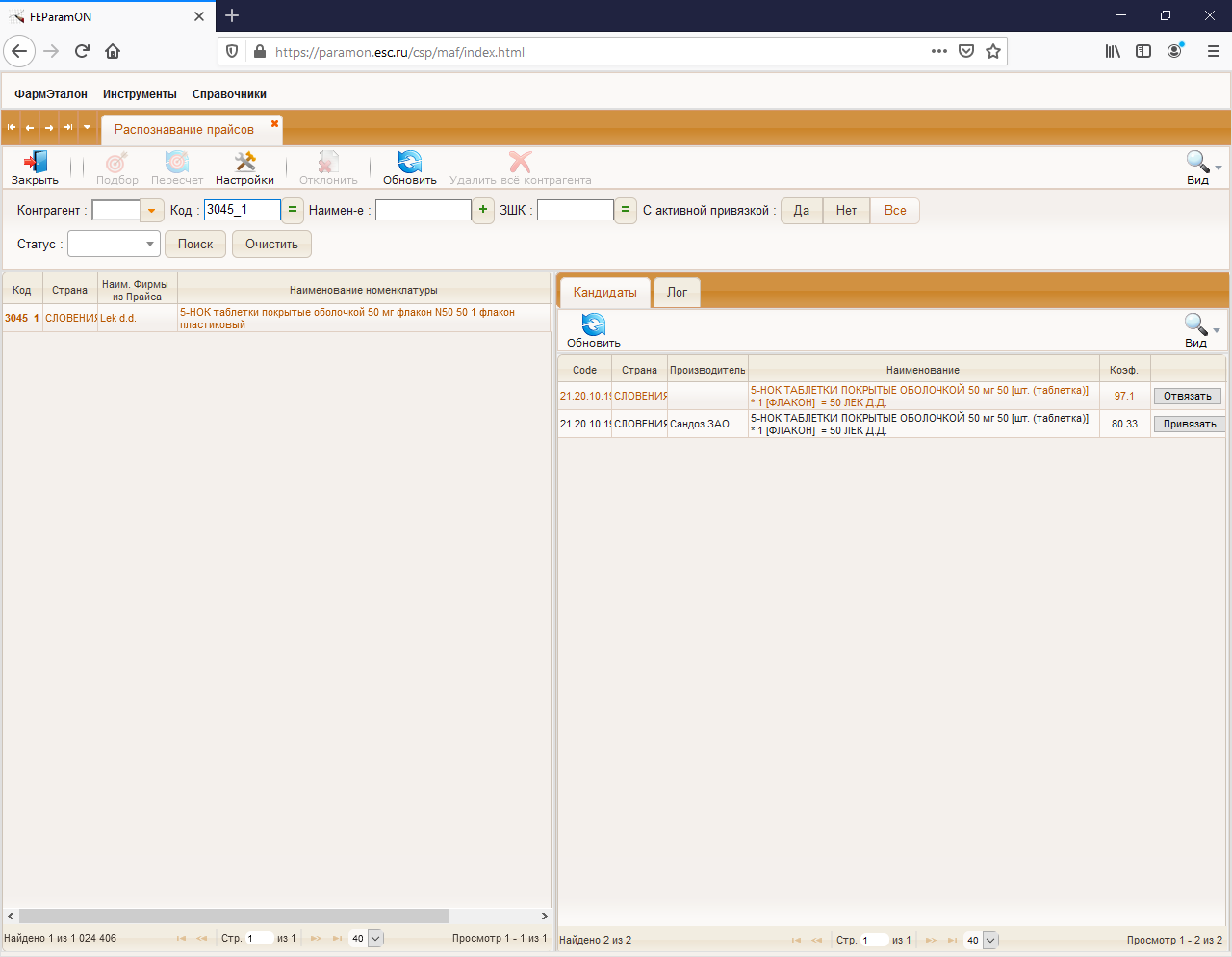

ESKLP

Issue Detected

Rating: 4.5 (1 review)

Awards: 2

Views: 872

IPM installs: 0



Version: 1.0.5 (23 Oct, 2021)

Works with: InterSystems IRIS

First published: 12 Jul, 2020

Last edited: 04 Jun, 2022

Last checked by moderator: 14 Nov, 2025 (Doesn't work)